Phase I: Findings

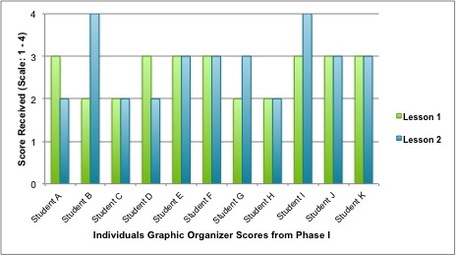

To better measure the effectiveness of my Phase I lessons on my students’ learning, I charted their scores from the graphic organizers to see how they changed from Lesson 1 to Lesson 2 (Figures 12 and 13).

This data is most telling when considered in light of my observation notes of the students during the lessons. Overall, there was a very slight increase in the scores on the graphic organizer sheets from Lesson 1 to Lesson 2 (0.17 points, or about 23.5%; Figure 13). Three students (27%) showed improvement in their scores, yet these students admitted to either not using the reading response procedure or not really benefitting from it. It seems that the procedure itself was not directly beneficial; rather, these students had more time to work through the questions in Lesson 2 than they did in Lesson 1. They used that additional time to read and answer the questions, and therefore received better scores. The other students received either the same or lower scores, regardless of whether they viewed the reading response procedure as helpful.

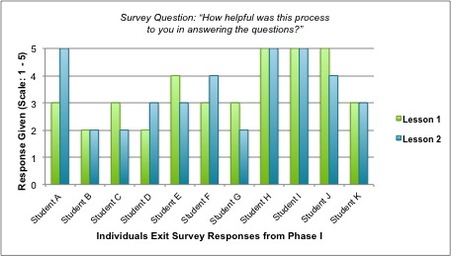

In addition to the graphic organizer scores, I also charted the students’ exit survey responses. The survey asked, “On a scale of one to five, how helpful was this process for you in answering the questions?” Students submitted their responses on sticky notes that included their names for tracking changes from one lesson to the next. The survey responses from both lessons provided an additional look at how, in the students’ view, the Phase I interventions had helped them.

Overall, the students’ responses from Lesson 1 spanned from somewhat positive to very positive, with most being in the three to five range (see Figure 14). The average response remained the same for both lessons, at 3.45.

Again, this data is most meaningful when considered alongside the graphic organizers and my observation notes. Only two students (18%) gave a survey response of 2. One of these students said she was comfortable and confident in her current reading habits. The other student consistently rushed through the assignment and missed information in both the directions and the reading passage itself. The remainder of the students gave a 3 or above, and I thought this was a fairly good starting point considering how new this process was to them.

Unfortunately, the exit survey responses from Lesson 2 were less favorable. Although the students were familiar with the question types and the procedure when they got to Lesson 2, and despite the fact that I attempted to incorporate their feedback from the previous lesson, most were not engaged. Even as I collected the exit survey, four of the 11 students commented that they did not even follow the reading response procedure. Another student verbally replied “-1,000” to my question, despite marking a “5” on the exit slip. As a result, while Figure 14 shows improvement in three student responses from Lesson 1 to Lesson 2, only one of these students – 9% of the class – appeared to genuinely adopt and benefit from the reading response procedure.

In doing a comprehensive review of the data I collected in Phase I through the graphic organizers, the exit surveys, and my own observation notes, I was able to identify two key themes in my findings.

Theme 1: Requiring evidence-supported responses helped students self-monitor their comprehension. I noticed a positive trend in how my students monitored their own answers when they had to cite evidence from the text. In both Lessons 1 and 2, I observed many students rushing to answer the questions without first looking back at what they had just read. As a result, they made several minor mistakes, such as forgetting details or drawing their own conclusions about the story. However, when the students diligently looked back in the story to check their answers, they caught their own mistakes and were able to self-correct before I pointed the errors out to them. So, while the reading response procedure as a whole may have been boring or burdensome to the class, citing evidence from the text for their answers helped the students establish better self-monitoring behavior.

Theme 2: More student dialogue could lead to stronger, more complete responses. I discovered how valuable discussion might be for the students. Unfortunately, this realization came about through negative rather than positive trends; I did not plan for or implement nearly enough discussion in Phase I, and this omission led to additional struggles for both my students and me. However, when I compared my notes with the students’ graphic organizer sheets, I noticed that the students who were chatting about the story itself actually provided better, more complete answers. Also, in reflecting on Phase I as a whole, I realized that the students would probably have been more engaged had they been allowed to discuss the story and their answers with their peers during the reading process, not just after answering the questions as I had tried to do in Lesson 2. It is reasonably possible that incorporating dialogue throughout the lesson could have yielded more correct or more complete answers, since students would have had a better opportunity to see their own errors by listening to their peers.
